By the time he was 40, Franklin Foer had led one of DC’s most enduring political magazines not once but twice. Two years after resigning as editor of the New Republic in 2010, he was lured back by a new owner, Facebook multimillionaire Chris Hughes, who seemed to share Foer’s idealism about journalism. But Hughes’s technocratic vision, and his hope that the internet would help the magazine turn a profit, shared little with his editor’s “moralistic and romantic” goals, as Foer puts it. In late 2014, he was fired, provoking a walkout by much of his staff.

In Foer’s new book, World Without Mind, the Atlantic national correspondent offers his story as a warning about the tech sector’s slide from digital dreamers to monopolists. By turns conservative and startlingly radical, Foer argues that Silicon Valley needs to be reined in before it costs us everything dear to us as a culture. Washingtonian talked to him at his home in upper Northwest.

What happened to the New Republic is a small portion of your book, but it’s hard not to read it as a poison-pen letter to the internet. Was a measure of this project just your wish to tell your side of that story?

It’s more that I care. The world I inhabited for so long and my way of living feel profoundly threatened. So yeah, it’s personal. Rather than sitting around wringing my hands, I decided to write a manifesto. Humans and machines are merging right now. I’d like it if human beings came out ahead.

But as you point out, we’ve been merging for a long time.

But we’re not merging with a hammer or farm machinery—we’re merging with technologies that shape the way we think. We’re on the brink of technology that will be implanted in us. Mark Zuckerberg talks about telepathy, and Elon Musk has invested in trying to create a brain-machine interface. We can pretend that a profound shift in human evolution isn’t occurring, or we can think about it and try to be intentional.

What does that entail?

As humans, we should make better choices. It’s hard because these processes are so seductive. I use Amazon more than I’m proud to admit. At the same time, I’m concerned about Amazon’s size and its power. There are policy measures we can take, too. We’ve fallen into such a libertarian rabbit hole that we cease to use policy to try to shape the economy to square with our values. We should consider breaking up some of the big technology companies to dissipate power.

These new tech monopolies are different, though, than the single-industry corporations our antitrust law was designed to stop. We can’t say to Amazon, “You sell books—you can’t also sell food.”

We think about these things in terms of prices and protecting consumers. On those issues, these companies are amazing. But that shouldn’t be the only measure, and in American history it hasn’t always been the only measure. We didn’t use to worship efficiency as the highest good. We worried about democracy and creating good citizens and that people read things that allowed them to make good democratic decisions.

There is a sense, though, that Google and Facebook are attempting to monopolize the flow of information—what you call the knowledge monopoly.

Well, it’s also a more traditional monopoly on advertising. I saw a scary number yesterday. Facebook and Google captured 99 percent of digital-advertising growth. That left 1 percent for the producers of, you know, actual news and ideas. But they’ve done it in part by deflating the market for information, by making everything free to their users. By doing so, they get to be the gateway to all that free stuff. Then, because they can furnish the most data about us, the readers, they have come to dominate the advertising business.

Your father, Albert Foer, a lawyer here in Washington, has been concerned with antitrust for years, and he founded the American Antitrust Institute. How much of your thinking is influenced by him?

Antitrust is having a revival—the Democrats just made it a plank of their economic plan. My dad has been in the fight against monopoly since it wasn’t so cool. I’m sure I’ve turned to the subject to please him, though I think he might consider some of my arguments a little flamethrowing for his tastes.

Even if policy breaks up these monopolies, how do we affect people’s choices about what they read?

It’s worth thinking creatively about ways state policy can actually subsidize certain media that we decide are more healthy. That doesn’t mean you promote the New York Times over the New York Post, but you may look at ways we can give newspapers in general a fairer hand in the competition.

One of your targets is Amazon and Jeff Bezos. But he did rescue the Washington Post and back a subscription pay wall, which you offer as a solution for journalism’s woes.

I think there is a journalistic myopia. If a white knight saves one of our institutions, we tend to ignore their other blemishes. I think it’s possible to say, “Thank you, Jeff Bezos, for saving the Post,” but I hope the reporters at the Post think through the possible implications. He may be their savior, but he’s not necessarily everyone else’s.

But hasn’t the internet kept its promise to democratize debate?

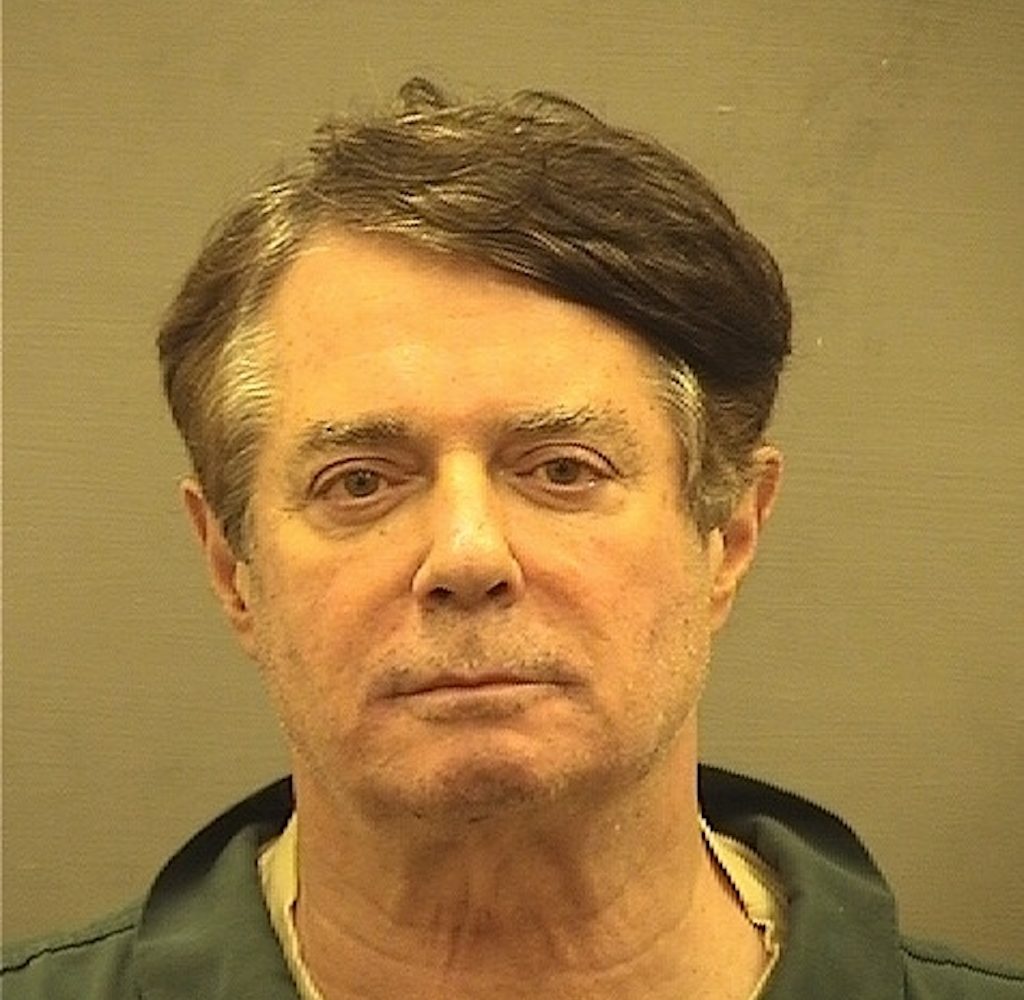

Yes and no. There is a bona fide democratization that’s happened because of social media. People have access to microphones that they didn’t have before, but it’s come at the expense of authority and traditional gatekeepers. We have the democratization of opinion, but we have the disappearance of any common accepted basis for fact. What’s more important for the functioning of democracy? The group that happened to be best connected to these new forms of media elected an authoritarian demagogue as President of the United States.

Are you saying Trump couldn’t have been elected without the internet?

Yes. I think the media of the 1960s or ’70s, which was so powerful, would not have permitted an outlier like Trump to be elected. There’s a lot not to be nostalgic about in that world: That media also gave its assent initially to a war in Vietnam, and it was undemocratic in every sort of way, yet it produced a much healthier democratic culture.

You say the internet companies are forcing us all to conform. What do you mean?

What I say is that they’re collectivist in their outlook. They want everyone to be part of one virtual organism, cells interacting in this body that is Facebook or Google. That’s a beautiful vision, but it’s not how it works out when one big firm runs the system for profit. We’re not cells; we’re lab rats in their experiment. They’re trying to influence our decision-making, nudge us in various directions about what to buy, what to read.

In the book, you mention Amazon offering books based on our previous purchases.

A more powerful example is Facebook privileging video over the written word in our news feeds. It’s what they call choice architecture. They are steering us to watch more things so that Facebook can sell advertising.

Aren’t they simply putting the ads where people are clicking?

If you’re presented with choices that steer you toward your worst instincts, that’s what you’ll choose. If I’m presented with Snickers bars, I won’t necessarily seek out kale. We think of Netflix as a great personalization machine. It understands how you love French midcentury cinema and British murder mysteries, so examples of those pop up in your personalization engine. But you’re also getting fed a lot of Netflix content. These companies create this illusion that you’re being catered to, but really you’re getting what makes them money.

The flip side of being catered to is the sense that we’re always being watched.

Right, and it’s a painful and inhibiting thing. Elites in Washington these days have to fear that they’re hacked by someone, whether by the Russians or domestic opponents. You start to inhibit yourself. You start to clam up or become an inauthentic person.

Yet for most of us, the level of privacy being invaded is pretty superficial. It goes back to the difference between the NSA looking at phone data and listening to our calls.

I actually think the NSA’s surveillance is more superficial than Silicon Valley’s. There’s a level of legal regulation there. When Facebook creates this portrait of you to sell to advertisers, you lose control in some fundamental way. They use it to make you consume things and read things.

Isn’t there a danger, though, in equating our consumer choices with ourselves? My toothpaste doesn’t say much about me.

But they know a lot more—where you’ve been and who you’ve been interacting with. Google boasts about knowing where you’ve been all day. As privacy slowly collapses, it doesn’t seem like that big a deal, but if an employer has access to a slew of data about your lifestyle, it may decide not to employ you because you’re going to cause its health care to bump up, or because you have bad moral character. As things become more automated, the commercial decisions made about us potentially become more arbitrary and specific. That’s the danger.

Can’t we all just choose to walk away from our machines?

Perhaps, but it’s more that we have to constrain technology. Maybe we’ll all be better off in a world of algorithms and chips implanted in our brain and ditching our bodies for some virtual reality. But before we commit to these steps, let’s consider the consequences. We need to hit pause and think about the society we’re creating.

This article appears in the September 2017 issue of Washingtonian.